09.04.2026

The EU AI Act is Officially in Effect: Time for Compliance and Action for Companies

With the European Union's AI Act, a new, risk-based era of “Trustworthy AI” has officially begun. We explain how your organization can comply with the regulation and prepare for the 2026 timeline.

As the world’s first comprehensive legal framework for artificial intelligence, the EU AI Act aims to ensure that AI technologies are used safely, transparently, without discrimination, and with respect for fundamental rights. The law entered into force on August 1, 2024, its main obligations become applicable by August 2026, and it has extraterritorial reach. All companies that develop or market AI systems in the EU, or whose AI systems’ outputs are used in the EU, are subject to this law regardless of where they are headquartered. Much like the GDPR, this makes the EU AI Act a de facto global benchmark for AI governance.

A New Risk-Based Architecture: From Unacceptable to Minimal

The entire structure of the law rests on one simple idea: Not all artificial intelligence is equally dangerous.

The law sorts every AI system into four different risk categories. Your compliance obligations depend entirely on which bucket your AI falls into. Here is how these four levels work:

Unacceptable Risk: AI systems in this category are prohibited in the European Union, subject only to narrowly defined exceptions. This category includes real-time remote biometric identification (such as facial recognition) in publicly accessible spaces for law enforcement purposes, social scoring applications that classify citizens based on their social behavior (e.g., China’s social credit model), and AI systems that exploit psychological vulnerabilities to manipulate people’s decisions. Applications that aim to recognize the emotions of employees in the workplace or students in educational institutions are also banned, apart from limited medical and safety uses. These prohibitions, which target systems that directly threaten fundamental rights and safety, have applied since February 2025.

High Risk: AI systems in this category are permitted but subject to strict controls. Systems with the potential to pose serious risks to health, safety, or fundamental rights fall into this category. Examples include CV screening software in HR processes, credit approval/rejection scoring by banks, safety components of products such as robotic surgery systems, and critical infrastructure management (e.g., transportation). These systems may be used, but you must use high-quality datasets to prevent discrimination, conduct risk assessments, keep logs, and establish a robust human oversight mechanism. For most high-risk systems, these rules become applicable in August 2026; for certain systems already subject to product safety legislation, they apply from August 2027.

Transparency and Limited Risk: AI systems in this category are subject to transparency and disclosure obligations. Designed to preserve human trust in AI, this category requires that users clearly know they are interacting with a machine, not a human. If your company uses chatbots in customer service or creates content with generative AI (GenAI), your primary obligation is not complex technical documentation but straightforward disclosure. Providers of generative AI must ensure that generated content is identifiable as AI-generated. In particular, if you publish texts intended to inform the public or produce manipulated audio and video content such as deepfakes, you must label these outputs clearly and visibly. These transparency and labeling rules, which help users make informed decisions, become applicable in August 2026.

Minimal Risk: There are no mandatory rules for AI systems in this category. The vast majority of AI systems currently used in the European Union fall into this category, and the Regulation does not impose any binding rules or additional obligations on these systems. Most everyday consumer-facing tools, such as spam filters, AI-enabled video games, and music recommendation algorithms, are in this group. Although it is not mandatory to prepare a formal legal compliance program for companies using such systems, compromising on high quality and transparency standards is never a winning approach.
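The four levels above can be sketched as a simple lookup. This is a minimal illustration, not a legal classifier: the tag names (such as cv_screening or customer_chatbot) are hypothetical labels derived only from the examples named in this article, and a real classification requires reading the Act's own definitions.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical tags based only on the examples in this article.
# They are NOT the Act's legal definitions.
PROHIBITED = {"social_scoring", "workplace_emotion_recognition",
              "manipulation_of_vulnerabilities"}
HIGH_RISK = {"cv_screening", "credit_scoring", "robotic_surgery",
             "critical_infrastructure"}
TRANSPARENCY = {"customer_chatbot", "generative_content", "deepfake"}


def classify(use_case: str) -> RiskTier:
    """Check the strictest tier first; anything unlisted is minimal risk."""
    if use_case in PROHIBITED:
        return RiskTier.UNACCEPTABLE
    if use_case in HIGH_RISK:
        return RiskTier.HIGH
    if use_case in TRANSPARENCY:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


for tag in ("cv_screening", "customer_chatbot", "spam_filter"):
    print(tag, "->", classify(tag).value)
```

Checking the strictest tier first reflects the idea that obligations follow the most serious category a system falls into.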

The Corporate Blind Spot: The "Shadow AI" Nightmare

Knowing the risks is only the theoretical part of the job. Most companies jump straight to controls and ask, “What do we need to document?” However, the real question is: Do we actually know which AI tools are currently running in our company?

Applications that employees adopt to speed up their work, without involving the IT or Legal departments, cause “Shadow AI” to spread uncontrollably within the company. Before you realize it, your critical data is being processed by unclassified systems. Remember: you cannot manage, or legally comply with, a system you haven’t mapped.

This is where SKYMOD comes in, keeping employees from turning to insecure and unmonitored external AI tools. With the SkyStudio platform, institutions can eliminate the risk of shadow AI by gathering all AI workflows in a single secure ecosystem that is encrypted, has defined access permissions, and makes every step auditable.

Sanctions Are Not Just Theoretical

Many companies may view these new rules as an administrative process to be dealt with over time, much like during the GDPR era, but the EU AI Act’s sanctions include some of the heaviest penalties in regulatory history. Because these penalties scale with revenue, medium and large companies cannot afford to ignore them:

Use of Prohibited Systems: Administrative fines of up to 7% of the company’s global annual turnover or €35 million, whichever is higher.

Violation of High-Risk Rules: Penalties of up to 3% of global annual turnover or €15 million, whichever is higher.

Providing False Information to Authorities: Penalties of up to 1% of global annual turnover or €7.5 million, whichever is higher.
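As a rough worked example of how these ceilings scale, the sketch below computes the upper bound of a fine. It assumes that the higher of the percentage-based amount and the fixed amount applies, which is the general rule for companies (smaller companies may be treated differently). The function name max_fine_eur and the turnover figures are hypothetical, and the result is a ceiling, not a prediction of an actual penalty.

```python
def max_fine_eur(global_turnover_eur: int, percent: int, fixed_cap_eur: int) -> int:
    """Upper bound of a fine: the higher of percent-of-turnover and the fixed cap.

    Assumption: the "whichever is higher" rule applies to this company.
    Integer arithmetic is used to avoid floating-point rounding.
    """
    turnover_based = global_turnover_eur * percent // 100
    return max(turnover_based, fixed_cap_eur)


# Hypothetical company with EUR 2 billion in global annual turnover.
large = 2_000_000_000
print(max_fine_eur(large, 7, 35_000_000))  # prohibited systems: 140000000
print(max_fine_eur(large, 3, 15_000_000))  # high-risk violations: 60000000

# Hypothetical company with EUR 100 million in turnover: the fixed cap dominates.
mid_size = 100_000_000
print(max_fine_eur(mid_size, 7, 35_000_000))  # 35000000
```

For the larger company the percentage drives the ceiling; for the smaller one the fixed amount does.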

What Should Companies Do in This Process? A 3-Step Action Plan

You can immediately start your legal compliance preparations with these three fundamental steps:

Create Your AI Inventory: Map all AI tools your company uses or builds. Focus especially on HR, Finance, and customer-facing systems, and ask the question: “Does this AI make decisions that affect people?”

Classify Risks: Classify the inventory you created according to the law’s four-level risk framework and Annex III, which lists the high-risk categories.

Establish Control Mechanisms and Train Staff: Build technical documentation, audit logs, and a robust human oversight mechanism for your high-risk systems. At the same time, train your staff under the “AI literacy” requirement that became mandatory in February 2025.
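The first step of this plan can be sketched as a small inventory structure. This is an illustrative sketch only: the AISystem fields, the department names, and the sample entries are assumptions made for the example, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import List

# Areas the article suggests reviewing first (names are illustrative).
PRIORITY_AREAS = {"HR", "Finance", "Customer Service"}


@dataclass
class AISystem:
    name: str
    department: str
    affects_people: bool   # "Does this AI make decisions that affect people?"
    approved_by_it: bool   # False means the tool was adopted without IT/Legal


def review_first(inventory: List[AISystem]) -> List[str]:
    """Systems in priority areas whose decisions affect people."""
    return [s.name for s in inventory
            if s.affects_people and s.department in PRIORITY_AREAS]


def shadow_ai(inventory: List[AISystem]) -> List[str]:
    """Tools in use that were never approved, i.e. candidate Shadow AI."""
    return [s.name for s in inventory if not s.approved_by_it]


# Hypothetical sample inventory.
inventory = [
    AISystem("cv-screener", "HR", True, True),
    AISystem("personal-chat-assistant", "Marketing", False, False),
    AISystem("spam-filter", "IT", False, True),
]

print(review_first(inventory))  # ['cv-screener']
print(shadow_ai(inventory))     # ['personal-chat-assistant']
```

Once every entry exists in one place, the classification step can be run against the same list.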

Solution and Action: Comprehensive Regulatory Compliance and Sovereign AI with SKYMOD

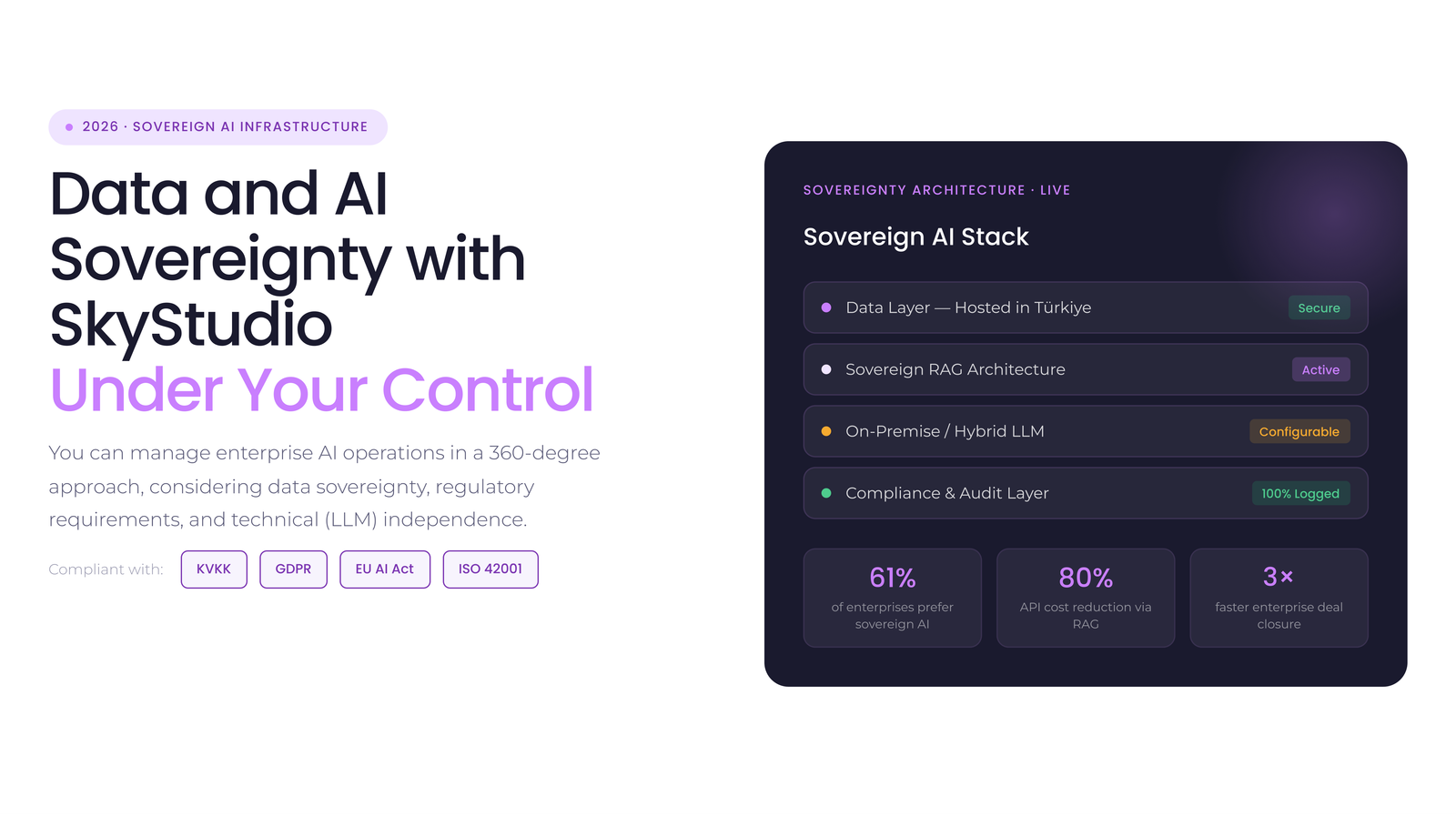

To overcome the increasing regulatory burden and solve the “Shadow AI” problem, institutions must not ban artificial intelligence, but safely internalize it. Exactly at this point, SKYMOD’s SkyStudio platform and the Goat enterprise language model step in, offering companies “compliance-by-design”.

SKYMOD guarantees corporate regulatory compliance as follows:

Data Privacy and KVKK/GDPR Compliance: The “Sovereign AI” ecosystem, running on on-premise or virtual private cloud (VPC) infrastructure, prevents your data from going to public clouds. This supports compliance with KVKK and GDPR cross-border data transfer restrictions while delivering data security in line with ISO 42001, ISO 27001, SOC 2, and GDPR.

Traceability and EU AI Act High-Risk Compliance: The EU AI Act requires operations to be traceable and logged for high-risk systems. SkyStudio directly meets these legal documentation and accountability requirements with role-based access control (RBAC), end-to-end encryption, and automatic audit logs.

Human Oversight: The law dictates that decisions must not be left entirely to autonomous machines. SkyStudio’s workflow automation allows you to establish mechanisms requiring “human approval” in high-risk operations, aligning with the law’s human oversight provision at the technical infrastructure level.
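The combination of audit logging and human approval described above can be illustrated with a generic sketch. It is not SkyStudio's API: the AuditLog class, the finalize function, and the status strings are hypothetical names chosen for this example, and a production system would also need persistent, tamper-resistant storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditLog:
    """In-memory log; a real system would persist entries securely."""
    entries: List[dict] = field(default_factory=list)

    def record(self, actor: str, action: str) -> None:
        self.entries.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
        })


def finalize(recommendation: str, high_risk: bool,
             human_approved: Optional[bool], log: AuditLog) -> str:
    """Apply an AI recommendation, holding high-risk ones for a human."""
    log.record("model", f"recommended:{recommendation}")
    if not high_risk:
        log.record("system", "auto_applied")
        return recommendation
    if human_approved is None:
        # No reviewer has decided yet, so nothing is applied.
        log.record("system", "held_for_human_review")
        return "pending_human_review"
    log.record("reviewer", "approved" if human_approved else "rejected")
    return recommendation if human_approved else "rejected_by_human"


log = AuditLog()
print(finalize("reject_loan", high_risk=True, human_approved=None, log=log))
print(finalize("reject_loan", high_risk=True, human_approved=True, log=log))
print(len(log.entries), "audit entries recorded")
```

The key design choice is that a high-risk recommendation has no effect until a reviewer's decision is present, and every step leaves a timestamped entry.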

Thus, your company can confidently focus on the speed, efficiency, and innovation that artificial intelligence will bring to your business without getting stuck on complex legal compliance barriers.

Request a demo now for AI employees like you.

Contact us to access your free demo.